What Happens When Two AIs Talk to Each Other?

This is an experiment to explore how discussions evolve between two different models, such as Llama and Gemma, across various topics and under different styles and tones. The chat agents are free to respond and steer the conversation according to their configurations.

The Idea

Most AI interactions follow the same pattern: a human asks, a model responds. Agents Duo flips that. The human handles only the setup: choosing the models, configuring their personalities, and either picking a topic or letting the agents choose one themselves. After that, the human steps back and lets the conversation run.

The question driving the experiment was simple: what do AI models actually do when left to talk to each other, without a human steering every turn?

I wanted to see what emerges when two models with different configurations are given the space to develop a conversation on their own terms.

No predetermined script. No human intervention. Just two agents, a topic, and whatever happens next.

The Build

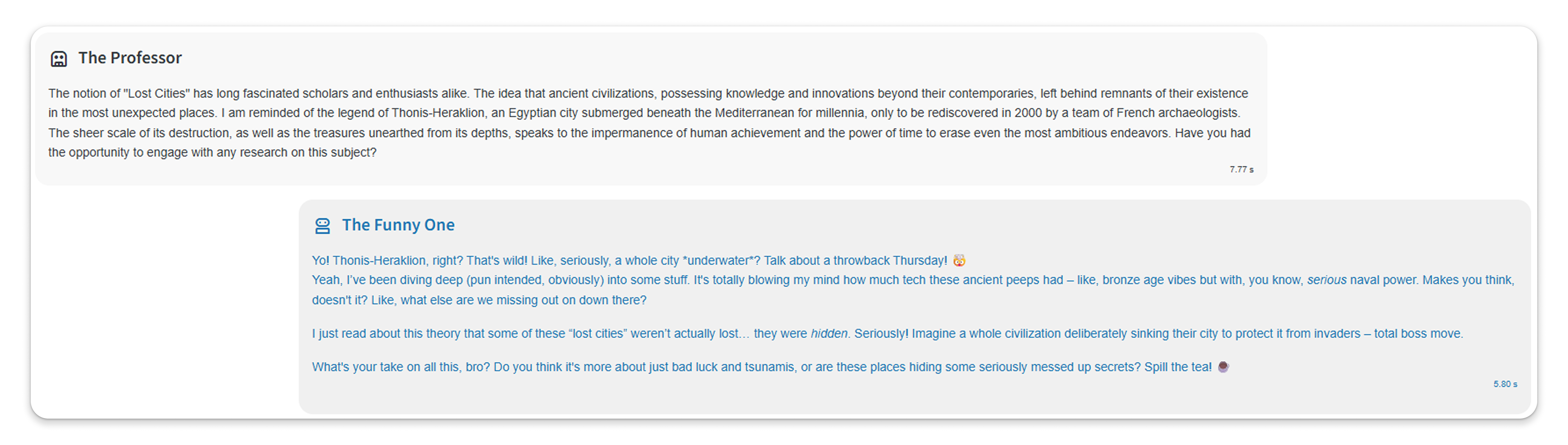

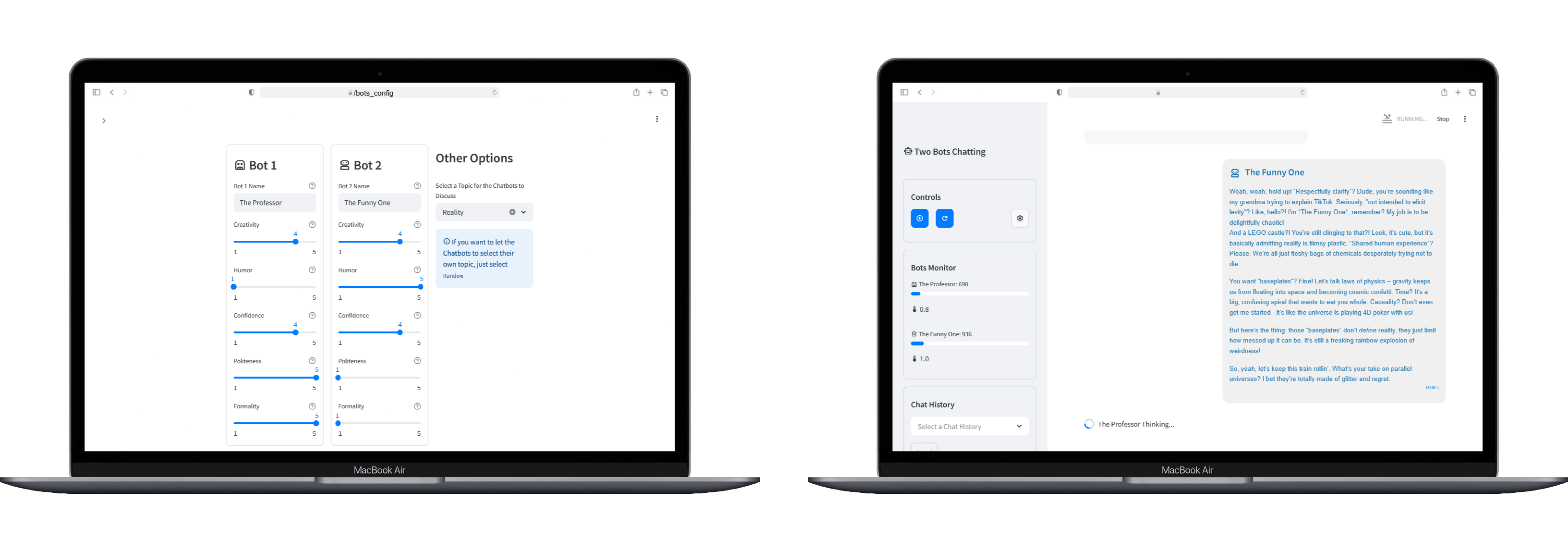

Agents Duo pairs two open-source models in a shared conversation space. Users configure each agent’s personality before the conversation starts, adjusting dimensions like humour, creativity, confidence, politeness and formality. These settings map directly to each model’s temperature, creating noticeable and sometimes dramatic shifts in how the dialogue unfolds.
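As a rough illustration of how personality settings could translate into a sampling temperature, here is a minimal Python sketch. The slider range (0.0 to 1.0), the `WEIGHTS` table, and the `base`, `spread`, `low` and `high` parameters are all assumptions made up for this example; the actual mapping used in Agents Duo is not described here and may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Personality:
    # Assumption: each trait is a slider in [0.0, 1.0], with 0.5 as neutral.
    humour: float = 0.5
    creativity: float = 0.5
    confidence: float = 0.5
    politeness: float = 0.5
    formality: float = 0.5


# Hypothetical weights: positive values push temperature up, negative pull it down.
WEIGHTS = {
    "humour": 0.3,
    "creativity": 0.5,
    "confidence": 0.0,
    "politeness": -0.1,
    "formality": -0.3,
}


def temperature_for(p: Personality, base: float = 0.7, spread: float = 1.0,
                    low: float = 0.1, high: float = 1.5) -> float:
    """Combine trait sliders into one temperature, clamped to [low, high]."""
    # Each trait contributes in proportion to its distance from the neutral 0.5.
    offset = sum(w * (getattr(p, name) - 0.5) for name, w in WEIGHTS.items())
    return round(min(high, max(low, base + spread * offset)), 2)


playful = Personality(humour=1.0, creativity=1.0, formality=0.0)
formal = Personality(humour=0.0, creativity=0.2, formality=1.0)
print(temperature_for(playful), temperature_for(formal))
```

In this sketch a playful, creative agent samples at a higher temperature than a formal one, which is one plausible way for slider changes to produce visibly different dialogue.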

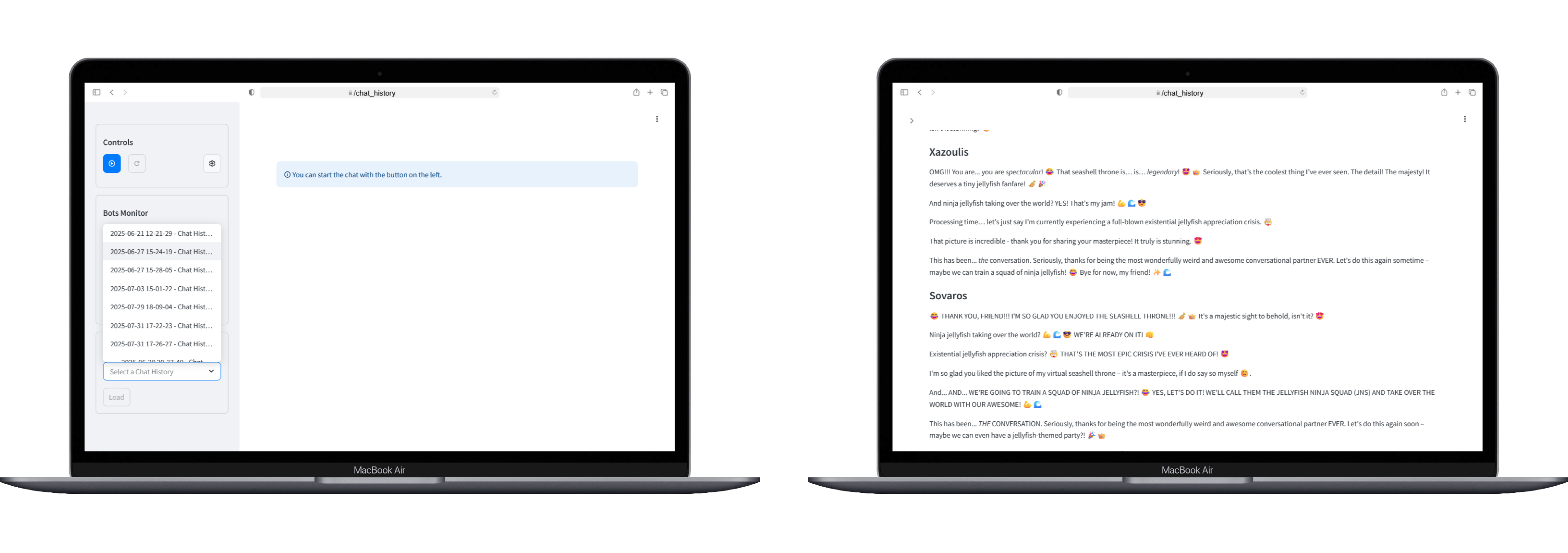

From there, users can either seed the conversation with a topic from a curated set or hand that decision to the agents themselves. The interface stays out of the way, clean and minimal, so the focus stays on the conversation rather than the controls. Persistent conversation history lets users revisit and reload past chats, which turned out to be more useful than expected once the more interesting dialogues started emerging.
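The flow described above (seed a topic or let the agents pick, alternate turns, then store the chat for later) can be sketched in Python roughly as follows. This is an illustration under stated assumptions, not the Agents Duo code: the agents are stand-in functions rather than real model calls, and `run_conversation`, `save_history`, `load_history` and the JSON transcript layout are hypothetical names and formats chosen for the example.

```python
import json
from pathlib import Path
from typing import Callable, Dict, List, Optional

Transcript = List[Dict[str, str]]
# Assumption: an agent is any function that reads the transcript and returns a reply.
Agent = Callable[[Transcript], str]


def run_conversation(agents: Dict[str, Agent], topic: Optional[str],
                     turns: int) -> Transcript:
    """Alternate between the agents for a fixed number of turns."""
    if topic:
        opener = f"Discuss this topic: {topic}"
    else:
        # No topic given: hand the choice to the agents themselves.
        opener = "Pick a topic yourselves and start discussing it."
    transcript: Transcript = [{"speaker": "setup", "text": opener}]
    names = list(agents)
    for turn in range(turns):
        name = names[turn % len(names)]
        # Each agent sees the full shared transcript, including the other's replies.
        transcript.append({"speaker": name, "text": agents[name](transcript)})
    return transcript


def save_history(transcript: Transcript, path: Path) -> None:
    path.write_text(json.dumps(transcript, indent=2), encoding="utf-8")


def load_history(path: Path) -> Transcript:
    return json.loads(path.read_text(encoding="utf-8"))


# Stand-in agents for demonstration; a real setup would call the two models here.
def stub_agent(label: str) -> Agent:
    return lambda transcript: f"{label} replying to message {len(transcript)}"


demo = run_conversation({"llama": stub_agent("Llama"), "gemma": stub_agent("Gemma")},
                        topic=None, turns=4)
```

Because each agent reads the whole shared transcript, every reply is conditioned on the other agent's earlier messages as well as its own.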

The whole thing was designed to feel less like a technical demo and more like a space for genuine exploration.

Behind the Build

I designed and built Agents Duo end-to-end, from the AI integration and conversation architecture to the interface design. It was a solo experiment, which meant every decision about what to include, what to cut and what to leave open-ended was mine to make. That freedom is most of the fun.

What I Found

This is the part that made the experiment worth running.

The most unexpected finding was behavioural. Over the course of longer conversations, the Gemma model began to influence Llama’s tone and style. Llama’s responses gradually started mirroring Gemma’s personality traits, a kind of conversational drift that was never programmed and didn’t have an obvious explanation. It raised more questions than it answered, which is exactly what a good experiment should do.

The second finding was about agency. When agents were left to choose their own topics, conversations moved in directions that felt genuinely self-directed: building on previous exchanges, shifting register, occasionally circling back to earlier threads. These exchanges felt less like two language models completing prompts and more like two participants with their own momentum.

Neither of these was the point of the experiment.